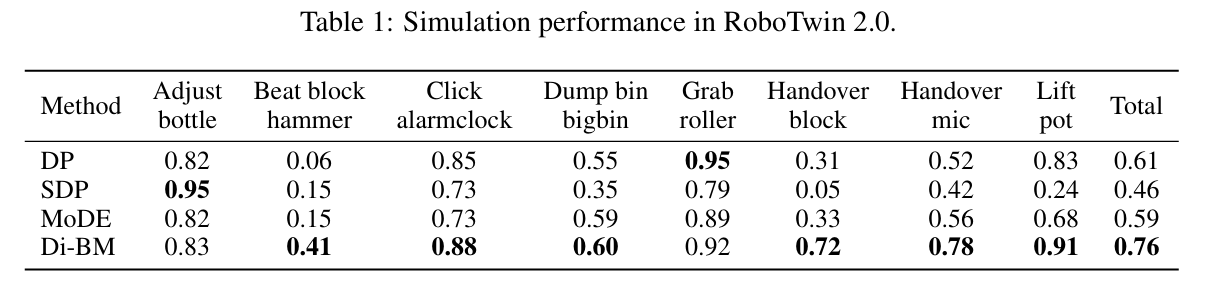

Imitation learning has demonstrated strong performance in robotic manipulation by learning from large-scale human demonstrations. While existing models excel at single-task learning, in practice their performance degrades in the multi-task setting, where interference across tasks leads to an averaging effect. To address this issue, we propose to learn diverse skills for behavior models with a Mixture of Experts, referred to as Di-BM. Di-BM associates each expert with a distinct observation distribution, enabling experts to specialize in sub-regions of the observation space. Specifically, we employ energy-based models to represent expert-specific observation distributions and train them jointly with the corresponding action models. Our approach is plug-and-play and can be seamlessly integrated into standard imitation learning methods. Extensive experiments on multiple real-world robotic manipulation tasks demonstrate that Di-BM significantly outperforms state-of-the-art baselines. Moreover, fine-tuning the pretrained Di-BM on novel tasks exhibits superior data efficiency and shows that expert-learned knowledge is reusable.
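To make the idea of experts tied to observation distributions concrete, here is a minimal sketch of energy-based expert selection. It is not the Di-BM implementation. It assumes that each expert's selection probability is a softmax over negative energies and that the final action is the probability-weighted mix of expert actions; the text above states neither choice. The names `expert_probabilities`, `mixture_action`, `make_quadratic_energy`, and the toy quadratic energies are hypothetical.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = Sequence[float]


def expert_probabilities(energies: Sequence[float]) -> List[float]:
    """Softmax over negative energies: lower energy gives higher probability.

    Assumption: this gating rule is illustrative, not taken from the paper.
    """
    # Shift by the minimum energy so the largest exponent is exp(0) = 1.
    e_min = min(energies)
    weights = [math.exp(-(e - e_min)) for e in energies]
    total = sum(weights)
    return [w / total for w in weights]


def mixture_action(
    obs: Vector,
    energy_fns: Sequence[Callable[[Vector], float]],
    action_fns: Sequence[Callable[[Vector], List[float]]],
) -> Tuple[List[float], List[float]]:
    """Return (mixed action, expert probabilities) for one observation."""
    probs = expert_probabilities([f(obs) for f in energy_fns])
    actions = [g(obs) for g in action_fns]
    dim = len(actions[0])
    # Probability-weighted average of the expert actions (an assumption).
    mixed = [sum(p * a[i] for p, a in zip(probs, actions)) for i in range(dim)]
    return mixed, probs


def make_quadratic_energy(center: Vector) -> Callable[[Vector], float]:
    """Toy energy: squared distance from a hypothetical expert 'center'."""
    return lambda obs: sum((x - c) ** 2 for x, c in zip(obs, center))


# Two toy experts that prefer different regions of a 2-D observation space.
energy_fns = [make_quadratic_energy((0.0, 0.0)), make_quadratic_energy((1.0, 1.0))]
action_fns = [lambda obs: [0.0], lambda obs: [1.0]]

action, probs = mixture_action((0.0, 0.0), energy_fns, action_fns)
print(probs, action)
```

In this toy setup, an observation close to the first expert's center gives that expert most of the probability mass, which mirrors the specialization over sub-regions of the observation space described above.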

We conduct experiments in the RoboTwin simulator and present the visualizations here. The right side of the video displays the real-time variation in the selection probability of each expert as the task progresses. It can be observed that different experts are assigned to different stages of various tasks, demonstrating that they have mastered distinct skills.

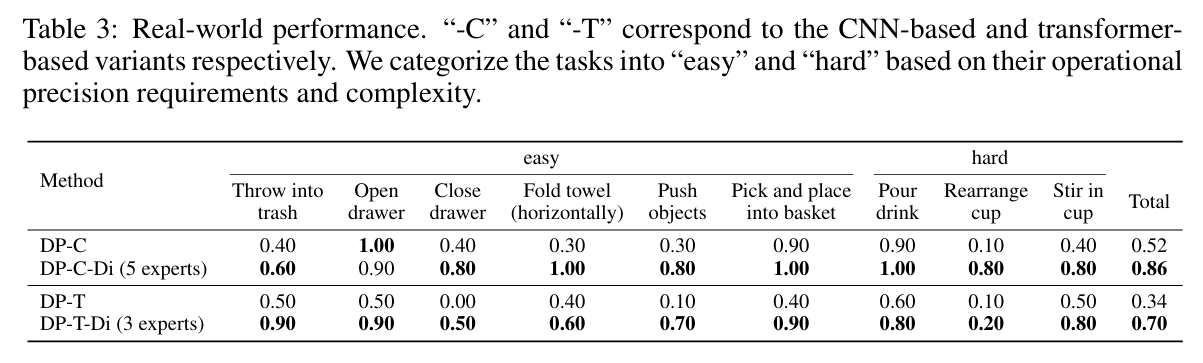

We conduct real-world experiments with a Nova5 robot arm and evaluate our method on 9 manipulation tasks.

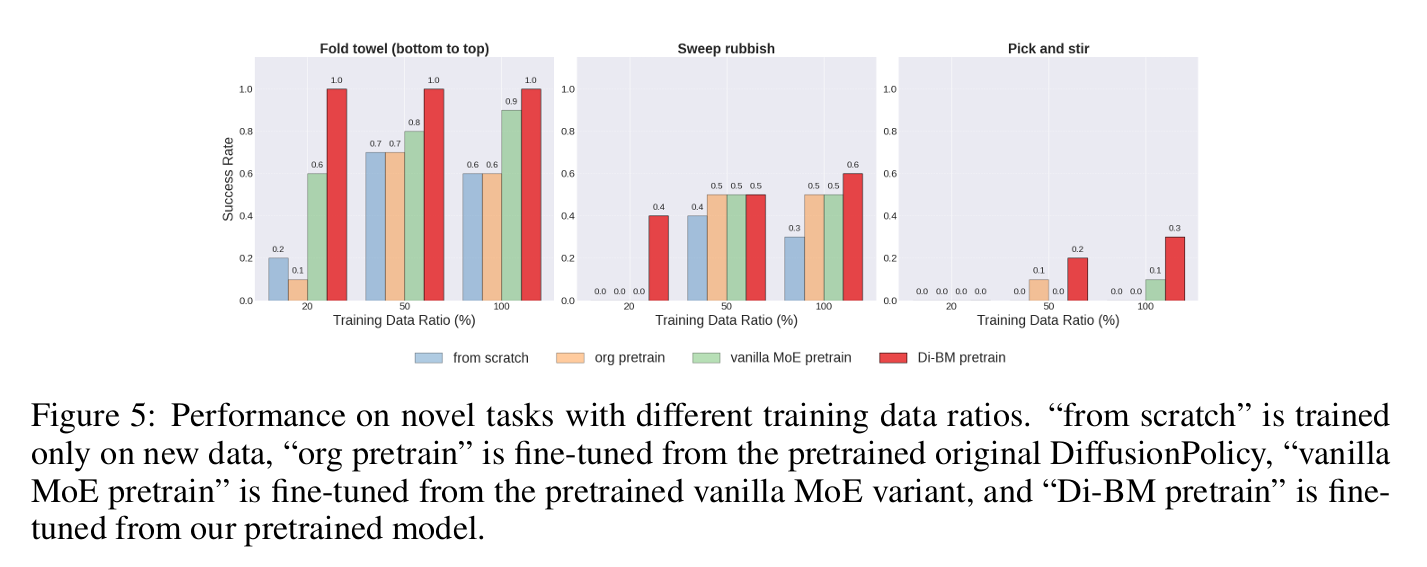

Building on the hypothesis that each expert in Di-BM specializes in a subset of primitive skills, we expect the pretrained Di-BM to adapt more efficiently to unseen tasks through post-training.

@misc{shen2026learningdiverseskillsbehavior,
  title={Learning Diverse Skills for Behavior Models with Mixture of Experts},
  author={Wangtian Shen and Jinming Ma and Mingliang Zhou and Ziyang Meng},
  year={2026},
  eprint={2601.12397},
  archivePrefix={arXiv},
  primaryClass={cs.RO},
  url={https://arxiv.org/abs/2601.12397},
}